The US-Israel war on Iran has been described as “the first AI war”. But recent deployments of artificial intelligence are, in fact, the latest in a long history of technological developments that prize a need for speed in the military “kill chain”.

“Sixty seconds – that’s all it took,” claimed a former Israeli Mossad agent of the strikes that killed Iran’s supreme leader, Ayatollah Ali Khamenei, on February 28 2026, the first day of the US-Israel war on Iran.

The speed and scale of war have been significantly enhanced by the use of AI systems. But this need for speed brings serious risks for civilians and military combatants alike.

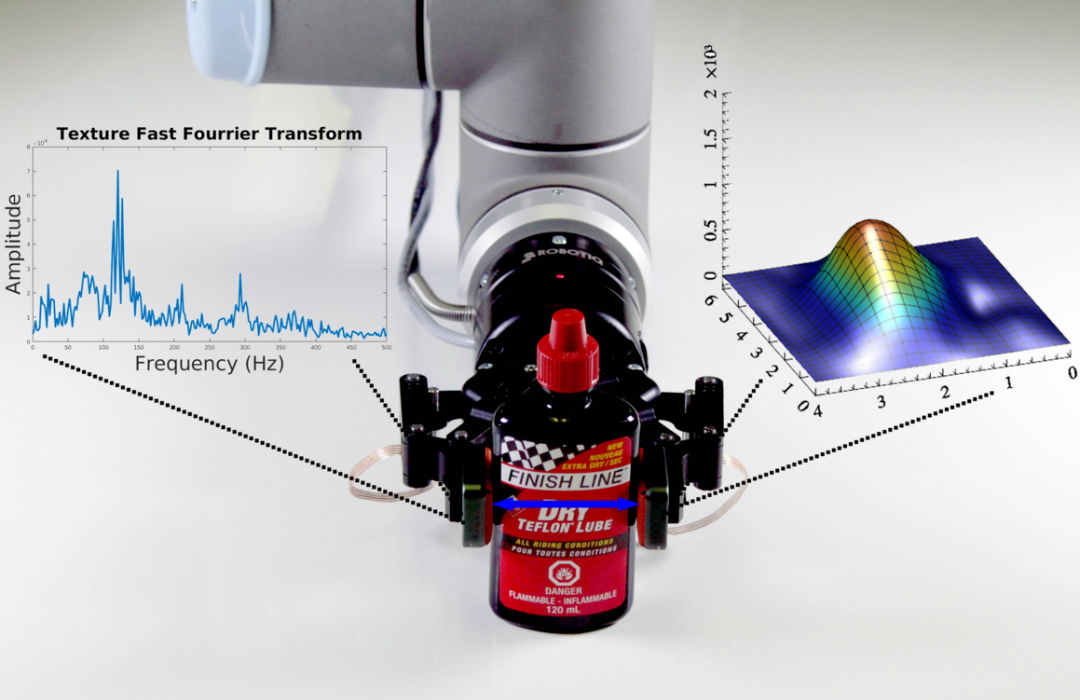

Modern military operations produce and rely on an enormous amount of intelligence. This includes intercepted phone calls and text messages, the mass surveillance of the internet (known as “signals intelligence”), as well as satellite imagery and video feeds from loitering drones. We can think of all this intelligence as data – and the problem is, there’s too much of it.

As early as 2010, the US Air Force was concerned about “swimming in sensors and drowning in data”. Too many hours of footage, and too many analysts manually reviewing this intelligence.

AI systems can dramatically speed up the analysis of military intelligence. Brad Cooper, head of US Central Command (CentCom), recently confirmed the use of AI tools in the war against Iran, saying:

“These systems help us sift through vast amounts of data in seconds, so our leaders can cut through the noise and make smarter decisions faster than the enemy can react … Advanced AI tools can turn processes that used to take hours and sometimes even days into seconds.”

In 2024, an investigation by Georgetown University found that the US Army’s 18th Airborne Corps had employed AI to assist with intelligence processing – reducing a team of 2,000 to just 20.

The allure of speed

In the second world war, the aerial targeting cycle – from collecting images to assembling target packages complete with intelligence reports – could take weeks or even months. But over the ensuing decades, the US military set about what it called “compressing the kill chain” – shortening the time between the identification of a target and use of force against it.

During the first Gulf war of 1991, Iraq’s president Saddam Hussein made use of mobile missile launchers that would roam the desert firing Scud missiles. By the time US radar identified a launcher’s location, it could be miles away. This “shoot and scoot” tactic required new technology to track these mobile targets.

A key breakthrough came shortly after the September 11 attacks in the form of an armed Predator drone.

In November 2002, the CIA targeted and killed Al Qaeda’s leader in Yemen, Qaed Salim Sinan al-Harithi. This heralded a new era of warfare in which drones piloted remotely from military bases in the US flew over the skies of Yemen, Somalia, Pakistan, Iraq, Afghanistan and elsewhere.

The drones’ powerful cameras could take high-resolution video and beam it back to the US via satellite in a matter of seconds, enabling the drone operators to track mobile targets. The same drone that had eyes on the target could also fire missiles to kill or destroy it.

With greater speed comes greater risk

Two decades ago, it was easy to dismiss as hyperbole the idea that the coming age of cyberwarfare might bring about “bombing at the speed of thought”, a phrase coined by American historian Nick Cullather in 2003. Yet with the advent of AI warfare, the unthinkable has become almost antiquated.

Part of the push to employ AI tools is the sense that human thought is no match for the processing speeds enabled by AI systems. The US Department of Defense’s artificial intelligence strategy states: “Military AI is going to be a race for the foreseeable future, and therefore speed wins … We must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment.”

While the precise uses of AI by the US and other militaries are shrouded in secrecy, information has been made public that highlights the risks of its use on civilian populations.

In Gaza, according to Israeli intelligence sources, the AI systems Lavender and Gospel have been programmed to accept up to 100 civilian casualties (and occasionally even more) for a strike on a single suspected Hamas combatant. More than 75,000 people are estimated to have been killed there since October 7 2023.

In February 2024, a US airstrike killed a 20-year-old student, Abdul-Rahman al-Rawi. At the time, a senior US official admitted the strikes had used AI targeting – although confusingly, the US military now says it has “no way of knowing” whether it used AI in specific airstrikes.

The risk is that AI could lower the threshold or cost of going to war, as people play an increasingly passive role in reviewing and rubber-stamping the work of AI.

The embedding of AI into military kill chains intersects with other alarming developments. After years of inaction, the US military spent more than a decade developing an infrastructure to avoid civilian casualties in war, but it has been almost totally dismantled under the Trump administration.

The lawyers who give advice to the military on targeting operations, including compliance with international law and rules of engagement, have been sidelined and fired.

Meanwhile, since the start of the war in Iran, more than 1,200 civilians have been killed, according to the Iranian Health Ministry. On February 28, the US military struck an elementary school in the south of Iran, killing at least 175 people, most of them children.

The US secretary of defense, Pete Hegseth, has been clear that the military’s aim in Iran is “maximum lethality, not tepid legality. Violent effect, not politically correct”.

With such an attitude, and by privileging speed over deliberation, civilian casualties become inevitable, and accountability ever more elusive.

The post “Iran war shows how AI speeds up military ‘kill chains’” by Craig Jones, Senior Lecturer in Political Geography, Department of Geography, Newcastle University was published on 03/17/2026 by theconversation.com