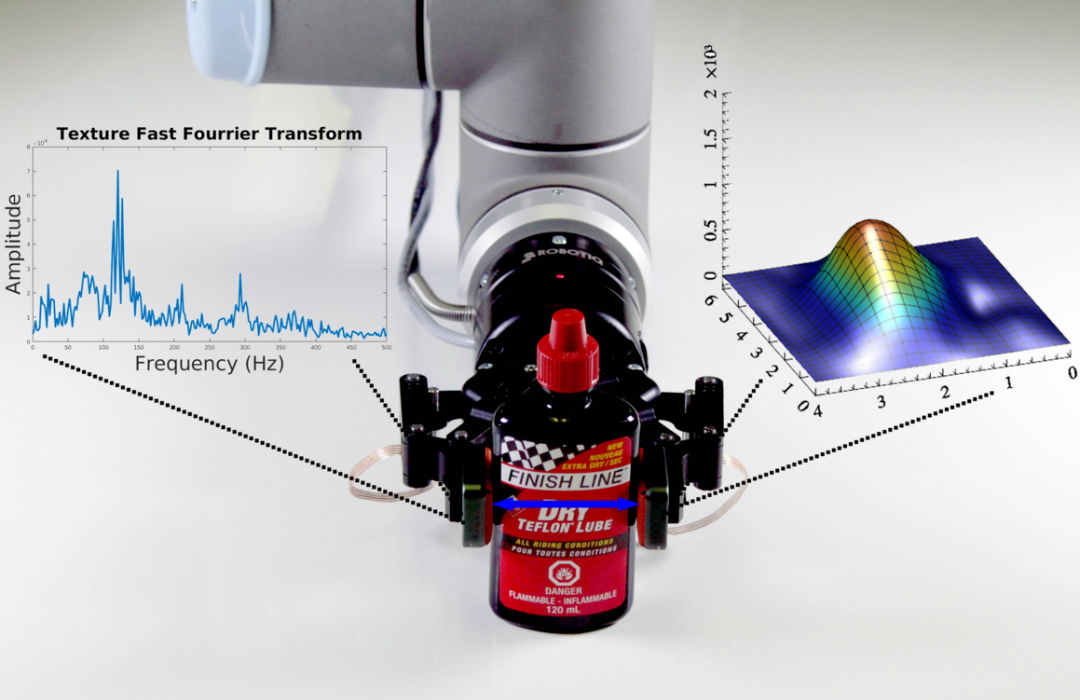

Vision-language-action models are the current state of the art in robotic manipulation. They still cannot pick up a potato chip without crushing it.

That is the result published earlier this year by the team behind the Video Tactile Action Model (VTAM). On a potato chip pick-and-place task — a task that demands high-fidelity force awareness, where vision alone cannot distinguish a crushing grasp from a holding one — VTAM outperformed the π0.5 baseline by 80%. Across the broader contact-rich benchmark suite, VTAM held a 90% average success rate.¹

The chip is an adversarial example, and that is precisely why it is the right test. At the point of grasp, only contact dynamics carry useful signals. Pressure, vibration, and force/torque tell the policy what is happening, correcting the visual estimation errors that vision-only models cannot detect on their own. A camera, however high its resolution, cannot do that work.

Tactile is not plug-and-play

Tactile sensors do not improve model performance on their own. Most learning pipelines today are built around vision and language, the two modalities with the largest datasets and the most mature architectures behind them. When tactile signals are appended to a vision-first pipeline without intentional design, they tend to get downweighted, drowned out, or lost in training. VTAM works because the architecture forces the model to forecast vision and tactile dynamics together, so the tactile signal directly shapes the learned policy rather than getting absorbed into vision and language. Tactile data only delivers its value when it is deliberately designed into the architecture.
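To make "drowned out" concrete, here is a toy numerical sketch. It is not VTAM's training objective: the function names, the 1000-value vision vector, and the 6-value tactile vector are all illustrative assumptions. It compares a single loss over concatenated features against a loss that averages each modality separately before summing.

```python
import numpy as np

def concatenated_mse(pred_vision, true_vision, pred_tactile, true_tactile):
    """One MSE over all values: each modality's share scales with its size."""
    pred = np.concatenate([pred_vision.ravel(), pred_tactile.ravel()])
    true = np.concatenate([true_vision.ravel(), true_tactile.ravel()])
    return float(np.mean((pred - true) ** 2))

def per_modality_mse(pred_vision, true_vision, pred_tactile, true_tactile,
                     tactile_weight=1.0):
    """Average each modality on its own, then sum with an explicit weight."""
    vision_term = float(np.mean((pred_vision - true_vision) ** 2))
    tactile_term = float(np.mean((pred_tactile - true_tactile) ** 2))
    return vision_term + tactile_weight * tactile_term

# Hypothetical sizes: 1000 vision values predicted perfectly,
# 6 tactile values each off by one unit.
true_vision = np.zeros(1000)
pred_vision = np.zeros(1000)
true_tactile = np.zeros(6)
pred_tactile = np.ones(6)

flat = concatenated_mse(pred_vision, true_vision, pred_tactile, true_tactile)
balanced = per_modality_mse(pred_vision, true_vision, pred_tactile, true_tactile)

print(f"concatenated loss: {flat:.5f}")
print(f"per-modality loss: {balanced:.5f}")
```

In this toy setup the tactile prediction is completely wrong, yet the concatenated loss barely registers it because six values are averaged in with a thousand. Giving tactile its own term is one simple way to keep its error visible to the optimizer. Real architectures address this in more sophisticated ways.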

The pattern is now consistent across the literature

The chip is one end of the spectrum, a case where vision fails outright and tactile carries the signal alone. Most real-world tasks sit further along that spectrum, where vision and tactile each contribute and the synergy between them is what drives training efficiency.

VTAM is not alone. The ManiSkill-ViTac 2025 benchmark formalises tactile-augmented evaluation across insertion, tool use, and precision assembly tasks. Independent research on tactile sensor configurations and grasp learning efficiency² shows the same lift. Policies that combine vision with tactile feedback consistently outperform vision-only equivalents on contact-rich tasks, and tend to reach the same success threshold from fewer demonstrations.

Failure detection is the second prize

A tactile-conditioned policy registers incipient slip as a vibration signature tens to hundreds of milliseconds before the object actually moves. That window is the difference between re-grasping and a full restart — between 95% and 99% uptime on the same line. Across a fleet, the operational case becomes hard to ignore.
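As a rough illustration of how a vibration signature can be flagged, the sketch below runs a simple energy threshold over a synthetic signal. Everything in it is an assumption made for demonstration: the 1 kHz sample rate, the 200 Hz burst, the window sizes, and the threshold ratio. It is a hand-written heuristic, not a learned tactile-conditioned policy and not the method of any specific sensor or paper.

```python
import numpy as np

def detect_slip_onset(signal, sample_rate_hz, window_s=0.01,
                      baseline_s=0.1, threshold_ratio=5.0):
    """Return the time (s) of the first window whose high-frequency RMS
    exceeds threshold_ratio times the baseline RMS, or None if none does."""
    window = max(1, int(round(window_s * sample_rate_hz)))
    baseline_n = int(round(baseline_s * sample_rate_hz))
    # The first difference acts as a crude high-pass filter.
    high_freq = np.diff(signal)
    baseline_rms = np.sqrt(np.mean(high_freq[:baseline_n] ** 2)) + 1e-12
    for start in range(baseline_n, len(high_freq) - window + 1, window):
        rms = np.sqrt(np.mean(high_freq[start:start + window] ** 2))
        if rms > threshold_ratio * baseline_rms:
            return start / sample_rate_hz
    return None

# Synthetic one-second recording: sensor noise plus a vibration burst
# that begins at 0.5 s (all values hypothetical).
SAMPLE_RATE_HZ = 1000
rng = np.random.default_rng(0)
t = np.arange(SAMPLE_RATE_HZ) / SAMPLE_RATE_HZ
noise = rng.normal(0.0, 0.01, size=t.shape)
burst = np.where(t >= 0.5, 0.2 * np.sin(2 * np.pi * 200 * t), 0.0)
signal = noise + burst

onset = detect_slip_onset(signal, SAMPLE_RATE_HZ)
print("slip onset detected at (s):", onset)
```

On a real gripper the thresholds would need tuning per sensor and per object, and a learned detector would likely replace the fixed ratio. The point is only that the cue lives in the contact signal and can be read before the object visibly moves.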

Failure detection is one case of a larger capability: producing accurate, high-resolution labels for what actually happened during the grasp. A binary success/fail label collapses information that the training pipeline could use. Did the grasp succeed cleanly, or did it succeed with internal slippage that the controller recovered from? Did the object settle stably, or did it shift during transport? Tactile sensing can distinguish these cases, and embedded contact perception can label them on-device, turning every episode into a more informative training example, not just the failed ones.
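A minimal sketch of what a richer label could look like in a data pipeline follows. The class names, fields, and the 2 mm shift tolerance are hypothetical, chosen only to mirror the cases described above. They are not taken from VTAM or from any Robotiq product.

```python
from dataclasses import dataclass
from enum import Enum

class GraspOutcome(Enum):
    CLEAN_SUCCESS = "clean_success"
    RECOVERED_SLIP = "recovered_slip"
    SHIFTED_IN_TRANSPORT = "shifted_in_transport"
    FAILED = "failed"

@dataclass
class EpisodeSummary:
    """Per-episode quantities a tactile pipeline might report (assumed)."""
    task_succeeded: bool
    slip_events: int
    max_pose_shift_mm: float

def label_episode(episode, shift_tolerance_mm=2.0):
    """Map an episode summary to a label richer than success/fail."""
    if not episode.task_succeeded:
        return GraspOutcome.FAILED
    if episode.max_pose_shift_mm > shift_tolerance_mm:
        return GraspOutcome.SHIFTED_IN_TRANSPORT
    if episode.slip_events > 0:
        return GraspOutcome.RECOVERED_SLIP
    return GraspOutcome.CLEAN_SUCCESS

# Three episodes that a binary label would all record as "success".
episodes = [
    EpisodeSummary(task_succeeded=True, slip_events=0, max_pose_shift_mm=0.3),
    EpisodeSummary(task_succeeded=True, slip_events=2, max_pose_shift_mm=0.8),
    EpisodeSummary(task_succeeded=True, slip_events=0, max_pose_shift_mm=4.5),
]
labels = [label_episode(ep) for ep in episodes]
for ep, label in zip(episodes, labels):
    print(label.value, ep)
```

The three example episodes would be indistinguishable under a pass/fail scheme. With labels like these, a training pipeline could weight or filter them differently.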

Figure 1. VTAM combines a language model, a predictive vision-tactile world model, and a diffusion-based action policy. From just 10 minutes of teleoperation per task, it learns to predict future actions, states, and forces — enabling contact-rich tasks such as chip pick-and-place, dynamic wiping, and stable peeling. Source: arXiv:2603.23481.

What this means for builders

Tactile sensing has moved from useful addition to defensible requirement for any team aiming at production-grade contact-rich manipulation. The question is no longer whether to instrument. It is whether to instrument now, or pay later in rebuilt datasets and recalibrated models.

VTAM put a real number on the case, and other recent work keeps pointing in the same direction. The next generation of foundation models will be built on data that captures contact, not on vision alone.

Ready to take the next step?

Talk to our technical team about tactile integration for your manipulation pipeline and learn more about how Robotiq can enable your application.

¹ Video Tactile Action Model (VTAM), arXiv:2603.23481.

² Representative findings include Tactile Robotics: An Outlook (arXiv) and published work on the impact of tactile sensor configurations on grasp learning efficiency.


The post “How tactile sensing improves model performance” by Jennifer Kwiatkowski was published on 05/07/2026 by blog.robotiq.com