When German journalist Martin Bernklau typed his name and location into Microsoft’s Copilot to see how his articles would be picked up by the chatbot, the answers horrified him.

Copilot’s results had asserted that Bernklau was an escapee from a psychiatric institution, a convicted child abuser and a conman preying on widowers. For years, Bernklau had served as a court reporter and the artificial intelligence (AI) chatbot had falsely blamed him for the crimes he had covered.

The accusations against Bernklau are not true, of course, and are examples of generative AI “hallucinations”. These are inaccurate or nonsensical responses to a prompt provided by the user and are alarmingly common with this technology. Anyone attempting to use AI should always proceed with great caution, because information from such systems needs validation and verification by humans before it can be trusted.

But why did Copilot hallucinate these terrible and false accusations?

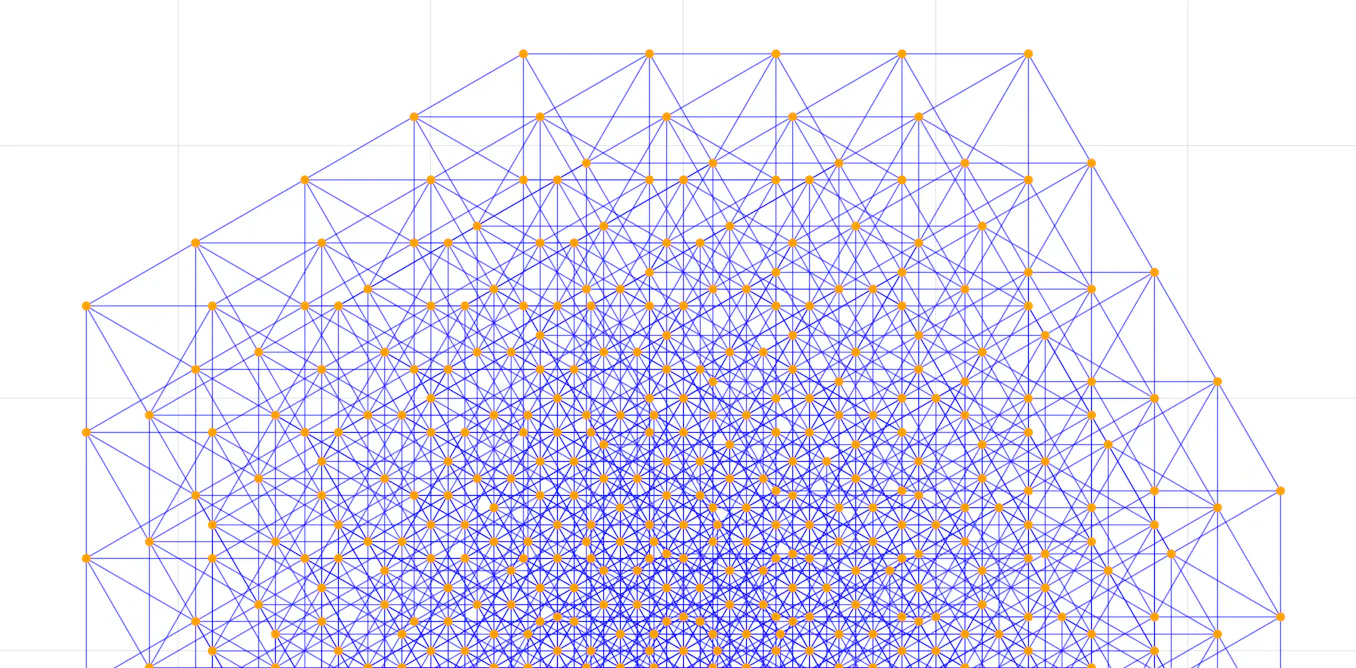

Copilot and other generative AI systems like ChatGPT and Google Gemini are large language models (LLMs). The underlying information processing system in LLMs is known as a “deep learning neural network”, which uses a large amount of human language to “train” its algorithm.

From the training data, the algorithm learns the statistical relationship between different words and how likely certain words are to appear together in a text. This allows the LLM to predict the most likely response based on calculated probabilities. LLMs do not possess actual knowledge.
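The idea of predicting a likely next word from counted word patterns can be illustrated with a deliberately tiny model. The sketch below is not how Copilot or ChatGPT is implemented (real LLMs use deep neural networks over far longer contexts); it is a minimal bigram counter, and the toy corpus and function names are assumptions made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word is directly followed by each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Convert the follow-on counts for one word into probabilities."""
    followers = counts.get(word.lower(), Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()} if total else {}

# Hypothetical toy corpus; a real LLM trains on hundreds of billions of words.
corpus = "the court heard the case . the court heard the witness . the court adjourned"
model = train_bigrams(corpus)
probs = next_word_probabilities(model, "court")

# The "prediction" is simply the follower with the highest probability.
most_likely = max(probs, key=probs.get)
print(most_likely, probs)
```

The model has no notion of what a court is. It only records that, in this text, "court" was followed by "heard" more often than by anything else.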

The data used to train Copilot and other LLMs is vast. While the exact details of the size and composition of the Copilot or ChatGPT corpora are not publicly disclosed, Copilot incorporates the entire ChatGPT corpus plus Microsoft’s own specific additional articles. The predecessors of ChatGPT4 – ChatGPT3 and 3.5 – are known to have used “hundreds of billions of words”.

Copilot is based on ChatGPT4 which uses a “larger” corpus than ChatGPT3 or 3.5. While we don’t know exactly how many words this is, the corpus tends to grow by orders of magnitude from one version of ChatGPT to the next. We also know that the corpus includes books, academic journals and news articles. And herein lies the reason that Copilot hallucinated that Bernklau was responsible for heinous crimes.

Bernklau had regularly reported on criminal trials of abuse, violence and fraud, and his reports were published in national and international newspapers. His articles have presumably been included in the language corpus, and they use specific words relating to the nature of the cases.

Since Bernklau spent years reporting in court, when Copilot is asked about him, the most probable words associated with his name relate to the crimes he has covered as a reporter. This is not the only case of its kind and we will probably see more in years to come.
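A rough way to picture this association is to count which words appear in documents that carry a given byline. The sketch below uses invented placeholder bylines and headlines (`reporter_x`, `reporter_y`), not real data, and is only an analogy for the statistical link described above, not a description of how Copilot stores information.

```python
from collections import Counter

# Hypothetical documents: each pairs a byline with the text of a report.
documents = [
    ("reporter_x", "defendant convicted of fraud"),
    ("reporter_x", "defendant convicted of abuse"),
    ("reporter_y", "council approves new budget"),
]

def words_associated_with(name, docs):
    """Count every word that appears in documents carrying this byline."""
    counts = Counter()
    for byline, text in docs:
        if byline == name:
            counts.update(text.split())
    return counts

assoc = words_associated_with("reporter_x", documents)
print(assoc.most_common())
```

Nothing in these counts distinguishes the person who wrote a report from the person the report is about. The byline simply ends up statistically close to words such as "convicted" and "fraud".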

In 2023, US talk radio host Mark Walters sued OpenAI, the company which owns ChatGPT. Walters hosts a show called Armed American Radio, which explores and promotes gun ownership rights in the US.

The LLM had hallucinated that Walters had been sued by the Second Amendment Foundation (SAF), a US organisation that supports gun rights, for defrauding and embezzling funds. This was after a journalist queried ChatGPT about a real and ongoing legal case concerning the SAF and the Washington state attorney general.

Walters had never worked for SAF and was not involved in the case between SAF and Washington state in any way. But because the foundation has similar objectives to Walters’ show, one can deduce that the text content in the language corpus built up a statistical correlation between Walters and the SAF which caused the hallucination.

Corrections

Correcting these issues across the entire language corpus is nearly impossible. Every single article, sentence and word included in the corpus would need to be scrutinised to identify and remove biased language. Given the scale of the dataset, this is impractical.

The hallucinations that falsely associate people with crimes, such as in Bernklau’s case, are even harder to detect and address. A permanent fix would require removing Bernklau’s name as author from the articles in the corpus, so as to break the connection.

To address the problem, Microsoft has engineered an automatic response that is given when a user prompts Copilot about Bernklau’s case. The response details the hallucination and clarifies that Bernklau is not guilty of any of the accusations. Microsoft has said that it continuously incorporates user feedback and rolls out updates to improve its responses and provide a positive experience.

There are probably many more similar examples that are yet to be discovered, and it is impractical to try to address each one individually. Hallucinations are an unavoidable byproduct of how the underlying LLM algorithm works.

As users of these systems, the only way for us to know that output is trustworthy is to interrogate it for validity using some established methods. This could include finding three independent sources that agree with assertions made by the LLM before accepting the output as correct, as my own research has shown.
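The three-source rule can be written down as a simple check. This is a minimal sketch under stated assumptions: the function name `is_corroborated` and the source labels are hypothetical, and deciding whether a source genuinely and independently supports a claim remains a human judgment that the code does not make.

```python
def is_corroborated(supporting_sources, required=3):
    """Return True if at least `required` distinct sources support a claim.

    `supporting_sources` lists the sources a human reviewer has judged to
    independently support the claim. Duplicates are counted only once.
    """
    return len(set(supporting_sources)) >= required

# Hypothetical usage: the same source cited twice does not count twice.
print(is_corroborated(["court record", "newspaper A", "newspaper B"]))
print(is_corroborated(["newspaper A", "newspaper A", "newspaper B"]))
```

The threshold of three follows the suggestion in the text. The `required` parameter is there only so the rule can be made stricter for higher-stakes claims.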

For the companies that own these tools, like Microsoft or OpenAI, there is no real proactive strategy that can be taken to avoid these issues. All they can really do is to react to the discovery of similar hallucinations.

The post “Why Microsoft’s Copilot AI falsely accused court reporter of crimes he covered” by Simon Thorne, Senior Lecturer in Computing and Information Systems, Cardiff Metropolitan University was published on 09/19/2024 by theconversation.com