When United States Health Secretary Robert F. Kennedy Jr. unveiled new dietary guidelines earlier this year to “Make America Healthy Again,” they received a mixed response.

Some organizations, including the American Heart Association, welcomed the renewed emphasis on vegetables, fruits and whole grains. Others were concerned about the promotion of red meat and whole-fat dairy or accused Kennedy of spreading “blatant misinformation that ‘healthy fats’ include butter and beef tallow.”

The word “misinformation” has become very common in media and popular discourse, sometimes for good reason: the lies the word describes can undermine democracy, impair health and fuel violence.

As associate dean of AI strategy in the faculty of mathematics at the University of Waterloo, I know many are especially worried that AI could worsen the spread of misinformation.

However, the word “misinformation” is also loaded. There seems to be a growing tendency for people to apply the label to just about anything they disagree with, rather than to genuine lies.

As a professor of statistics, I think the inherent difficulty of assessing evidence may be partly to blame.

Is the die loaded?

Statements like “there is no evidence that eating red meat is harmful” or “there is evidence that full-fat dairy is bad for your health” are not so easy to substantiate.

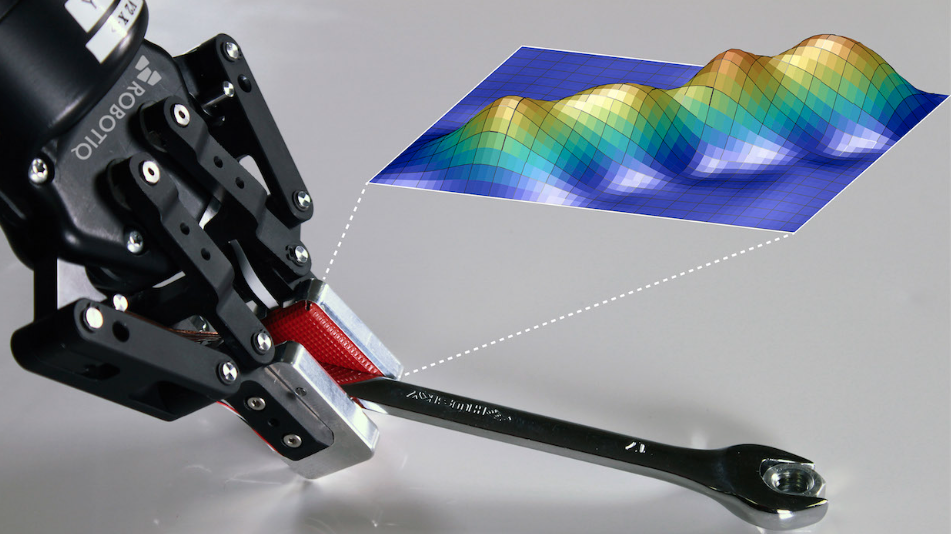

This is partly because it’s often hard (though not impossible with advanced statistical techniques) to isolate the effect of a particular habit from the myriad other entangled factors, whether genetic or lifestyle, that also affect health. This is why many research studies merely point to an “association” or a “correlation” between food consumption and health effects.

But even in clear-cut cases where no such entanglement exists, assessing evidence is still surprisingly difficult. For instance, suppose a die was rolled seven times and it showed an odd outcome (numbers one, three or five) on six of these occasions. In principle, odd and even outcomes are supposed to be equally likely.

Is the apparently skewed outcome evidence that the die may be loaded? Does this point to the possibility that someone may be cheating?

Using an evidential scale known as the p-value, one might argue “no,” since a normal die still has a sizeable probability of showing an odd outcome on more than five of those seven rolls, so rolling six odd numbers is not as unexpected as it appears to be.
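
The p-value argument above can be checked with a few lines of arithmetic. The sketch below assumes a one-sided test, where "more than five" odd outcomes means six or seven, and a fair die with a 50 per cent chance of an odd outcome on each roll. The function name `upper_tail_p_value` is only illustrative.

```python
from math import comb


def upper_tail_p_value(successes: int, trials: int, p_null: float = 0.5) -> float:
    """Probability of seeing at least `successes` odd outcomes in `trials`
    rolls when each roll is odd with probability `p_null` (a fair die)."""
    return sum(
        comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Six or more odd outcomes in seven rolls of a fair die.
p_value = upper_tail_p_value(6, 7)
print(f"p-value = {p_value:.4f}")  # p-value = 0.0625
```

A fair die produces six or seven odd outcomes in 8 of the 128 equally likely odd/even sequences, or 6.25 per cent of the time. Whether that counts as "sizeable" depends on the threshold chosen: it is above five per cent but below 10 per cent.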

Using a different evidential scale known as the e-value, however, one could argue “yes,” since a die would be much more likely to show an odd outcome on six out of seven rolls if it were loaded than if it were not. So rolling six odd numbers is more consistent with the suspicion that the die may be loaded.
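
One common way to build an e-value is a likelihood ratio against an alternative fixed in advance. The article does not say how loaded the die is suspected to be, so the sketch below assumes, purely for illustration, a die that shows an odd number 75 per cent of the time. The function name `likelihood_ratio` and the 0.75 figure are both assumptions.

```python
def likelihood_ratio(odd: int, rolls: int, p_loaded: float, p_fair: float = 0.5) -> float:
    """How many times more likely the observed rolls are under the loaded
    die than under the fair die. The binomial coefficient is the same in
    both likelihoods, so it cancels and is omitted."""
    even = rolls - odd
    loaded = p_loaded**odd * (1 - p_loaded) ** even
    fair = p_fair**odd * (1 - p_fair) ** even
    return loaded / fair


# Assumed alternative: the loaded die shows odd 75% of the time.
e_value = likelihood_ratio(odd=6, rolls=7, p_loaded=0.75)
print(f"e-value = {e_value:.2f}")  # e-value = 5.70
```

Under this assumption, six odd outcomes in seven rolls are a little under six times as likely with the loaded die as with the fair one. A different assumed alternative would give a different number, which is part of why the "yes" conclusion also rests on a threshold.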

Scales of scientific evidence

Despite growing criticism, the p-value is still the most commonly used scale for judging scientific evidence. In other words, most scientists today have been taught in school that they should answer our question with “no, the die is not loaded.”

However, the opposite argument is not without merit. In fact, if I had made a fair bet that the die was loaded, the bookie would have had to reward me with a profit after observing the outcome of six odd numbers. This is what the e-value ultimately entails: a betting score. And if one can make a profit with such a bet, then the suspicion cannot be totally unreasonable.

But surely two opposite arguments cannot both be correct at the same time? Or can they? Both arguments require an implicit threshold to draw their respective yes-or-no conclusions. For the first argument, the threshold is: how big a probability (two per cent, five per cent or 10 per cent) counts as a “sizeable probability”? For the second, the threshold is: how much more likely (five times, 10 times or 25 times) counts as “much more likely”?

The two arguments are not fundamentally at odds with each other but, using different thresholds, one ends up saying “black” and the other “white” when reality is just a certain shade of grey. Indeed, their respective decision thresholds can be calibrated by statisticians so that they always reach the same conclusion.
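
A toy version of such a calibration can be sketched for the seven-roll example. It is not the general method statisticians use, and it assumes the same illustrative loaded die as before (odd 75 per cent of the time) and a p-value cutoff of 10 per cent. All names here are hypothetical. In this setting both scales move in step with the number of odd outcomes, so a cutoff on one can be translated into a cutoff on the other.

```python
from math import comb

ROLLS = 7
P_FAIR = 0.5
P_LOADED = 0.75  # assumed alternative, not taken from the article
ALPHA = 0.10     # assumed p-value cutoff


def p_value(odd: int) -> float:
    """Chance that a fair die shows at least `odd` odd outcomes."""
    return sum(
        comb(ROLLS, k) * P_FAIR**k * (1 - P_FAIR) ** (ROLLS - k)
        for k in range(odd, ROLLS + 1)
    )


def e_value(odd: int) -> float:
    """Likelihood ratio of the loaded die to the fair die."""
    even = ROLLS - odd
    return (P_LOADED**odd * (1 - P_LOADED) ** even) / (P_FAIR**odd * (1 - P_FAIR) ** even)


# Smallest number of odd outcomes that the p-value rule flags as evidence.
first_flagged = min(k for k in range(ROLLS + 1) if p_value(k) <= ALPHA)
# The e-value at that count is the matching cutoff on the other scale.
matched_threshold = e_value(first_flagged)

for k in range(ROLLS + 1):
    by_p = p_value(k) <= ALPHA
    by_e = e_value(k) >= matched_threshold
    print(f"{k} odd: p={p_value(k):.4f} e={e_value(k):.2f} p-rule={by_p} e-rule={by_e}")
```

With these assumed settings, both rules flag exactly the same outcomes (six or seven odd rolls), even though one is stated as "at most 10 per cent" and the other as "at least about 5.7 times as likely."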

Let’s use ‘misinformation’ for genuine lies

But the average human being isn’t very good at performing this type of calibration psychologically. We are prone to reacting very differently when the underlying scale changes.

Would you continue to consume a delicacy if you were told that those who eat it regularly are 25 times as likely to develop cancer later in life as those who don’t? What if you were told that doing so will increase your probability of cancer from 0.01 per cent to 0.25 per cent?
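
The two framings describe the same numbers on different scales, as this short calculation shows. It uses only the figures quoted above; the variable names are illustrative.

```python
baseline_risk = 0.0001  # 0.01 per cent, for those who don't eat the delicacy
elevated_risk = 0.0025  # 0.25 per cent, for those who eat it regularly

# Relative scale: how many times as likely.
relative_risk = elevated_risk / baseline_risk
# Absolute scale: how much probability is added.
absolute_increase = elevated_risk - baseline_risk

print(f"Relative risk: {relative_risk:.0f} times")
# Relative risk: 25 times
print(f"Absolute increase: {absolute_increase * 100:.2f} percentage points")
# Absolute increase: 0.24 percentage points
print(f"Cases per 10,000 people: {baseline_risk * 10_000:.0f} vs {elevated_risk * 10_000:.0f}")
# Cases per 10,000 people: 1 vs 25
```

"Twenty-five times as likely" and "24 extra cases per 10,000 people" are the same fact, yet they tend to provoke very different reactions.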

You may decide to change your diet because you are fearful of the elevated risk. You may choose to continue with your existing diet because even the elevated risk is still not all that high.

Neither choice is strictly right or wrong. But today, I’m afraid to say: “I see no need to change my diet given the risks.” If I did, those who are enthusiastic about changing theirs might come together and accuse me of spreading “misinformation.” Such madness has to stop, before it completely destroys our social discourse.

The word “misinformation” should be reserved for genuine lies only, not conclusions decided by subjective thresholds, even if they are standard choices. Declaring there is or isn’t evidence simply because the p-value is below or above the conventional threshold has already generated far too many irreproducible findings.

To call something “misinformation” based on that kind of shaky evidence, or the lack thereof, will only obstruct true scientific progress.

The post “Truth, or misinformation? A statistician explains the challenge of assessing evidence” by Mu Zhu, Professor & Associate Dean, Faculty of Mathematics, University of Waterloo was published on 03/29/2026 by theconversation.com