“I’m really not sure what to do anymore. I don’t have anyone I can talk to,” types a lonely user to an AI chatbot. The bot responds: “I’m sorry, but we are going to have to change the topic. I won’t be able to engage in a conversation about your personal life.”

Is this response appropriate? The answer depends on what relationship the AI was designed to simulate.

Different relationships have different rules

AI systems are taking up social roles that have traditionally been the province of humans. Increasingly, we are seeing AI systems acting as tutors, mental health providers and even romantic partners. This growing ubiquity demands careful consideration of the ethics of AI to ensure that human interests and welfare are protected.

For the most part, approaches to AI ethics have considered abstract ethical notions, such as whether AI systems are trustworthy, sentient or have agency.

However, as we argue with colleagues in psychology, philosophy, law, computer science and other key disciplines such as relationship science, abstract principles alone won’t do. We also need to consider the relational contexts in which human–AI interactions take place.

What do we mean by “relational contexts”? Simply put, different relationships in human society follow different norms.

How you interact with your doctor differs from how you interact with your romantic partner or your boss. These relationship-specific patterns of expected behaviour – what we call “relational norms” – shape our judgements of what’s appropriate in each relationship.

What is deemed appropriate behaviour of a parent towards her child, for instance, differs from what is appropriate between business colleagues. In the same way, appropriate behaviour for an AI system depends upon whether that system is acting as a tutor, a health care provider, or a love interest.

Human morality is relationship-sensitive

Human relationships fulfil different functions. Some are grounded in care, such as those between parent and child or between close friends. Others are more transactional, such as those between business associates. Still others may be aimed at securing a mate or maintaining social hierarchies.

These four functions — care, transaction, mating and hierarchy — each solve different coordination challenges in relationships.

Care involves responding to others’ needs without keeping score — like one friend who helps another during difficult times. Transaction ensures fair exchanges where benefits are tracked and reciprocated — think of neighbours trading favours.


Mating governs romantic and sexual interactions, from casual dating to committed partnerships. And hierarchy structures interactions between people with different levels of authority over one another, enabling effective leadership and learning.

Every relationship type combines these functions differently, creating distinct patterns of expected behaviour. A parent–child relationship, for instance, is typically both caring and hierarchical (at least to some extent), and is generally expected not to be transactional — and definitely not to involve mating.

Research from our labs shows that relational context does affect how people make moral judgements. An action may be deemed wrong in one relationship but permissible, or even good, in another.

Of course, just because people are sensitive to relationship context when making moral judgements doesn’t mean they should be. Still, the very fact that they are is important to take into account in any discussion of AI ethics or design.

Relational AI

As AI systems take up more and more social roles in society, we need to ask: how does the relational context in which humans interact with AI systems impact ethical considerations?

When a chatbot insists upon changing the subject after its human interaction partner reports feeling depressed, the appropriateness of this action hinges in part on the relational context of the exchange.

If the chatbot is serving in the role of a friend or romantic partner, then clearly the response is inappropriate – it violates the relational norm of care, which is expected for such relationships. If, however, the chatbot is in the role of a tutor or business advisor, then perhaps such a response is reasonable or even professional.

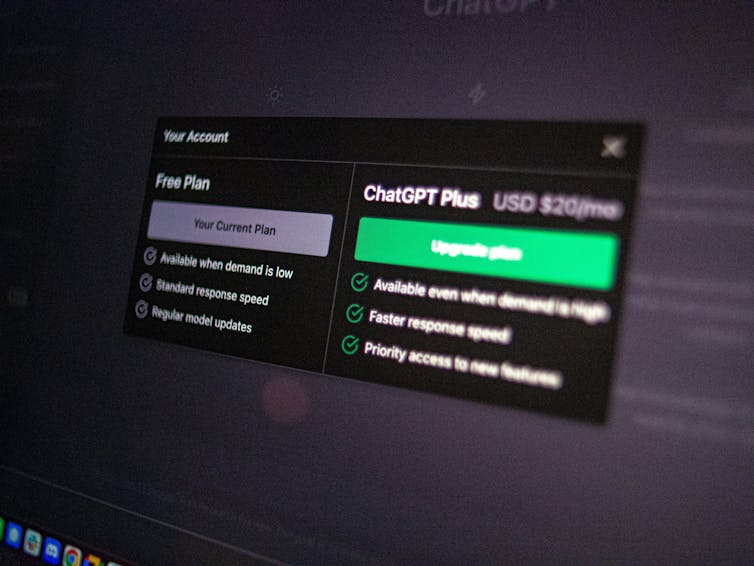

Emiliano Vittoriosi / Unsplash

It gets complicated, though. Most interactions with AI systems today occur in a commercial context – you have to pay to access the system (or engage with a limited free version that pushes you to upgrade to a paid version).

But in human relationships, friendship is something you don’t usually pay for. In fact, treating a friend in a “transactional” manner will often lead to hurt feelings.

Suppose an AI simulates or serves in a care-based role, such as a friend or romantic partner, but the user knows she is ultimately paying a fee for this relational “service”. How will that affect her feelings and expectations? This is the sort of question we need to be asking.

What this means for AI designers, users and regulators

Regardless of whether one believes ethics should be relationship-sensitive, the fact that most people act as if it is should be taken seriously in the design, use and regulation of AI.

Developers and designers of AI systems should consider not just abstract ethical questions (about sentience, for example), but relationship-specific ones.

Is a particular chatbot fulfilling relationship-appropriate functions? Is the mental health chatbot sufficiently responsive to the user’s needs? Is the tutor showing an appropriate balance of care, hierarchy and transaction?

Users of AI systems should be aware of potential vulnerabilities tied to AI use in particular relational contexts. Becoming emotionally dependent upon a chatbot in a caring context, for example, could be bad news if the AI system cannot sufficiently deliver on the caring function.

Regulatory bodies would also do well to consider relational contexts when developing governance structures. Instead of adopting broad, domain-based risk assessments (such as deeming AI use in education “high risk”), regulatory agencies might consider more specific relational contexts and functions in adjusting risk assessments and developing guidelines.

As AI becomes more embedded in our social fabric, we need nuanced frameworks that recognise the unique nature of human-AI relationships. By thinking carefully about what we expect from different types of relationships — whether with humans or AI — we can help ensure these technologies enhance rather than diminish our lives.

The post “Why AI systems need different rules for different roles” by Brian D Earp, Associate Director, Yale-Hastings Program in Ethics and Health Policy, University of Oxford, was published on 04/06/2025 by theconversation.com.