Artificial intelligence has dramatically improved how robots perceive the world.

Computer vision allows robots to detect objects, recognize patterns, and navigate complex environments. Cameras help robots identify parts on a conveyor, locate packages in a bin, and avoid obstacles in warehouses.

But when a robot needs to pick up an object, vision alone is not enough.

To manipulate objects reliably, robots need something humans rely on constantly: touch.

This is where tactile sensing becomes essential.

Vision alone cannot explain contact

Most robotic systems today rely heavily on cameras.

Vision works well for:

- object detection

- pose estimation

- navigation

- scene understanding

But cameras cannot measure physical interaction.

When a robot grips an object, many critical variables appear that cameras cannot observe directly:

- contact force

- pressure distribution

- friction

- slip

- compliance of materials

For example, imagine picking up a wet glass, a soft cloth, or a rigid metal component.

Each requires a different grasp strategy. Humans automatically adjust grip strength based on what we feel. Robots that rely only on vision must infer these properties indirectly, which is much harder.

This limitation explains why manipulation remains one of the biggest challenges in robotics.
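To make the role of friction concrete, here is a minimal sketch that assumes a simple Coulomb friction model and a parallel gripper holding an object against gravity. The function name, the friction coefficients, and the safety factor are illustrative assumptions, not measured values.

```python
G = 9.81  # gravitational acceleration in m/s^2


def min_grip_force(mass_kg, mu, contacts=2, safety_factor=1.0):
    """Normal force per finger (N) needed so friction can carry the object's weight.

    Assumes Coulomb friction: each contact can resist up to mu * normal_force,
    so contacts * mu * normal_force must be at least mass_kg * G.
    """
    if mu <= 0:
        raise ValueError("friction coefficient must be positive")
    return safety_factor * mass_kg * G / (contacts * mu)


# Hypothetical friction coefficients for the same 0.3 kg glass.
dry_force = min_grip_force(0.3, mu=0.5)
wet_force = min_grip_force(0.3, mu=0.2)
print(f"dry: {dry_force:.2f} N, wet: {wet_force:.2f} N")
```

The lower the friction coefficient, the harder the gripper has to squeeze. A camera cannot measure that coefficient directly, which is why a vision-only system has to guess it.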

Humans rely on touch to manipulate objects

Human hands contain several types of mechanoreceptors that detect different aspects of touch.

These receptors allow us to perceive:

- sustained pressure

- vibration

- skin deformation

- texture

- temperature

Together, these signals help us perform dexterous tasks such as:

- tightening our grip when an object begins to slip

- adjusting finger position during manipulation

- recognizing objects without looking

Robotic systems need similar capabilities to achieve reliable manipulation.

Tactile sensing gives robots the ability to perceive contact dynamics, which is essential for interacting with the physical world.

What tactile sensors allow robots to detect

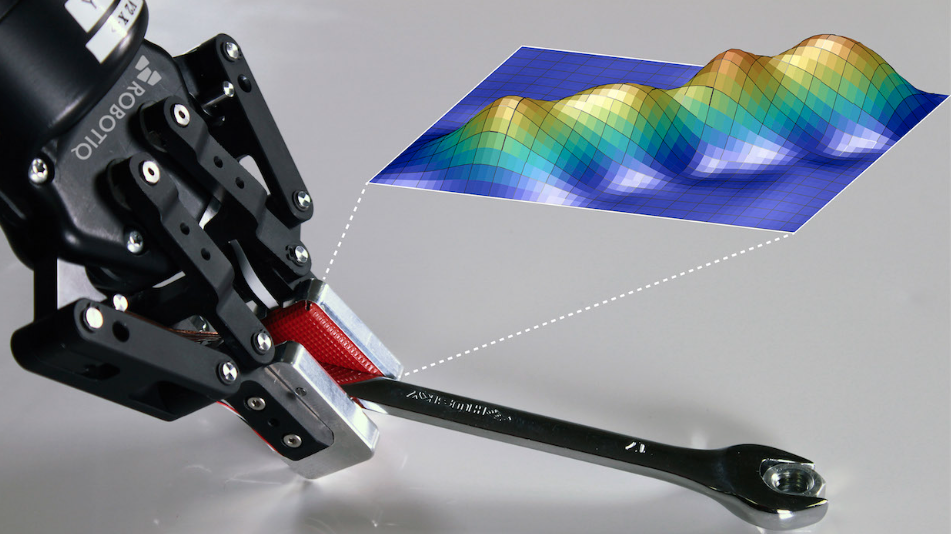

Modern tactile sensing systems can capture several types of information during a grasp.

Key sensing modalities include:

Pressure

Measures the size, shape, and intensity of contact.

Pressure data helps robots determine:

- grasp quality

- object pose in the gripper

- object identity
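As a rough illustration of how pressure data can be summarized, the sketch below reduces a small grid of pressure readings to a few contact features. The grid layout, the threshold value, and the function name are assumptions made for this example and do not describe any specific sensor.

```python
def contact_features(pressure_map, threshold=0.05):
    """Summarize a 2D pressure map given as a list of rows of readings.

    Readings below `threshold` are treated as no contact.
    Returns None when nothing is in contact.
    """
    active = 0
    total = 0.0
    peak = 0.0
    weighted_row = 0.0
    weighted_col = 0.0
    for r, row in enumerate(pressure_map):
        for c, p in enumerate(row):
            if p < threshold:
                continue
            active += 1                # contact size (number of active cells)
            total += p                 # overall contact intensity
            peak = max(peak, p)
            weighted_row += r * p
            weighted_col += c * p
    if active == 0:
        return None
    return {
        "active_cells": active,
        "total_pressure": total,
        "peak_pressure": peak,
        # Pressure-weighted center of contact, in (row, col) grid units.
        "centroid": (weighted_row / total, weighted_col / total),
    }


example = [
    [0.0, 0.0, 0.0],
    [0.0, 1.0, 1.0],
    [0.0, 0.0, 0.0],
]
print(contact_features(example))
```

A centroid that drifts between readings suggests the object is shifting in the gripper, which is one simple way to relate pressure data to object pose.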

Vibration

Detects rapid changes in contact.

This is useful for identifying:

- slip events

- collisions

- surface interactions
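One simple way to flag a slip event, sketched here under the assumption that slip shows up as rapid sample-to-sample changes in a vibration signal, is to compare the energy of those changes against a threshold. The threshold and the signals below are made up for illustration; a real system would tune them to the sensor and sampling rate.

```python
import math


def slip_detected(samples, threshold=0.2):
    """Return True if the RMS of sample-to-sample changes exceeds `threshold`.

    Differencing acts as a crude high-pass filter: slow changes in grip
    pressure are suppressed, rapid vibration is kept.
    """
    if len(samples) < 2:
        return False
    diffs = [b - a for a, b in zip(samples, samples[1:])]
    rms = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return rms > threshold


steady_grasp = [1.0] * 50                                  # constant contact
slow_squeeze = [1.0 + 0.001 * i for i in range(50)]        # gradual change
vibrating = [1.0 + 0.5 * (-1) ** i for i in range(50)]     # rapid oscillation

print(slip_detected(steady_grasp), slip_detected(slow_squeeze), slip_detected(vibrating))
```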

Proprioception

Measures the configuration of the gripper itself.

This helps robots understand:

- finger positions

- gripper shape

- object deformation during grasping

Together, these signals give robots a much richer understanding of interaction with objects.

What tactile sensing means in robotics

Tactile sensing refers to technologies that allow robots to detect and interpret physical contact with objects.

Unlike vision systems, tactile sensors measure interaction directly at the point of contact.

Common tactile sensing capabilities include:

- pressure detection (contact location and intensity)

- vibration sensing (slip detection)

- force distribution across the gripper

- finger configuration and object deformation

These signals allow robots to adapt their grasp, detect instability, and manipulate objects more reliably.
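Adapting a grasp can be sketched as a small feedback rule: tighten when instability is detected, otherwise relax slowly toward a minimum force. This is a simplified illustration, not a production controller; the function name, step sizes, and force limits are assumptions.

```python
def update_grip_force(force, slipping, step=0.5, relax=0.05, f_min=1.0, f_max=20.0):
    """Return the next grip force command in newtons.

    Tightens by `step` when slip is reported, otherwise relaxes by `relax`,
    and always keeps the result within [f_min, f_max].
    """
    if slipping:
        force += step
    else:
        force -= relax
    return max(f_min, min(f_max, force))


# Hypothetical sequence of slip flags coming from a tactile sensor.
force = 5.0
for slipping in [False, False, True, True, False]:
    force = update_grip_force(force, slipping)
print(f"final grip force: {force:.2f} N")
```

Relaxing slowly when no slip is detected keeps the grip from ratcheting up to the maximum force, which matters for fragile or deformable objects.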

As robotics moves toward physical AI, tactile sensing is becoming an important complement to vision systems.

Why adoption of tactile sensing has been slow

Although tactile sensing has existed in robotics research for years, adoption in industry has been slow.

Several challenges explain why.

Sensor durability

Many tactile sensors developed in research labs are fragile and not designed for industrial environments.

Manufacturing environments introduce:

- dust

- vibrations

- temperature changes

- continuous operation

Sensors must withstand millions of cycles.

Data interpretation

Tactile signals are complex.

Unlike images, which humans can easily interpret, tactile data is:

- high-dimensional

- noisy

- strongly linked to physical mechanics

Understanding what tactile signals mean during manipulation can require sophisticated models and signal processing.

Lack of standard datasets

Another challenge is the lack of large tactile datasets.

Vision systems benefit from billions of images and videos available online. Tactile data, on the other hand, must be collected through real-world interactions, which is much harder to scale.

Why tactile sensing is becoming more important now

Despite these challenges, tactile sensing is becoming increasingly important in robotics.

Several trends are accelerating adoption:

- improved sensor durability

- advances in AI and signal processing

- growing interest in physical AI

- increasing demand for robots that can handle unstructured environments

Robots are no longer limited to repetitive factory tasks. They are being asked to perform more complex manipulation tasks, such as:

- bin picking

- flexible material handling

- assembly operations

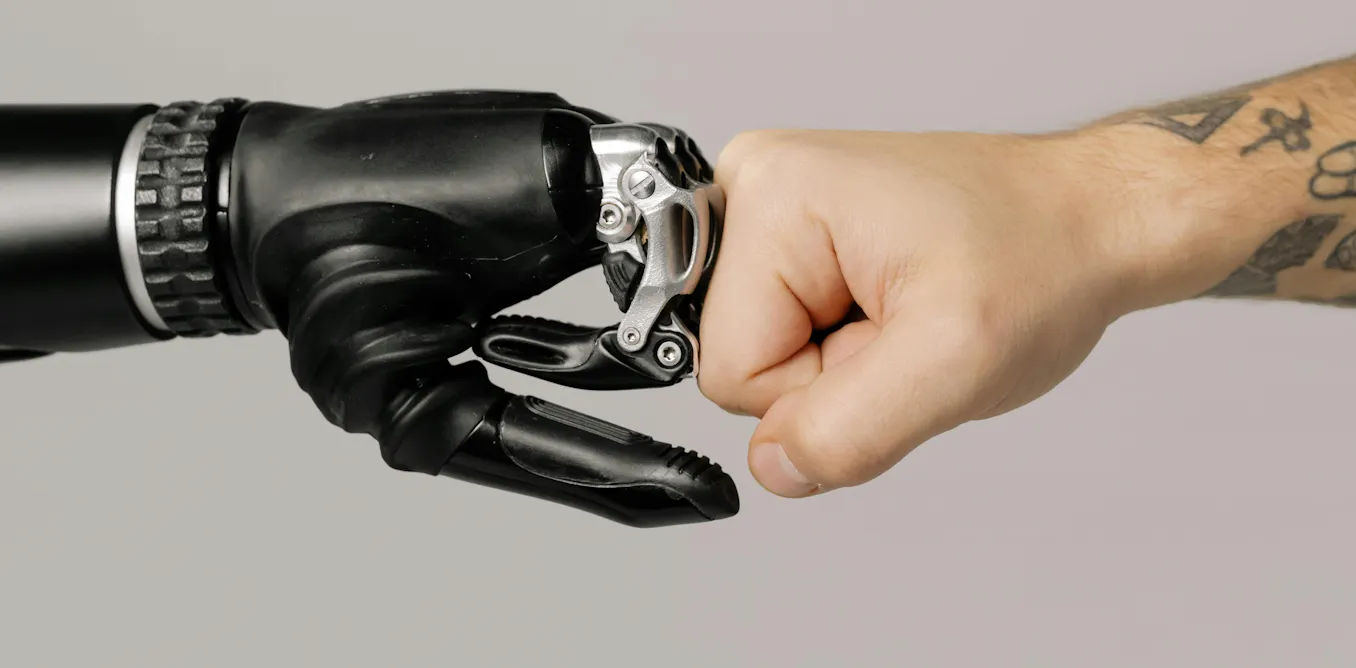

- human–robot collaboration

These tasks require robots to adapt to uncertainty, which makes tactile feedback extremely valuable.

Toward robots that can see and feel

Vision will remain a fundamental sensing modality in robotics.

But the robots that succeed in real-world environments will combine multiple forms of perception.

Future robotic systems will rely on:

- vision for global perception

- tactile sensing for contact understanding

- force sensing for interaction control

Together, these sensing systems allow robots to move beyond simple automation and toward adaptive manipulation.

This combination is one of the key building blocks of physical AI.

Want to explore the future of robotic manipulation?

In our white paper, we explore how sensing, hardware design, and Lean Robotics principles are shaping the next generation of automation.

Explore the full framework behind physical AI

Learn how mechanical design, sensing, and Lean Robotics principles help turn AI robotics demos into reliable automation systems.

Read the white paper: Giving physical AI a hand

The post “Robots can see. But they still can't feel.” by Jennifer Kwiatkowski was published on 03/24/2026 by blog.robotiq.com