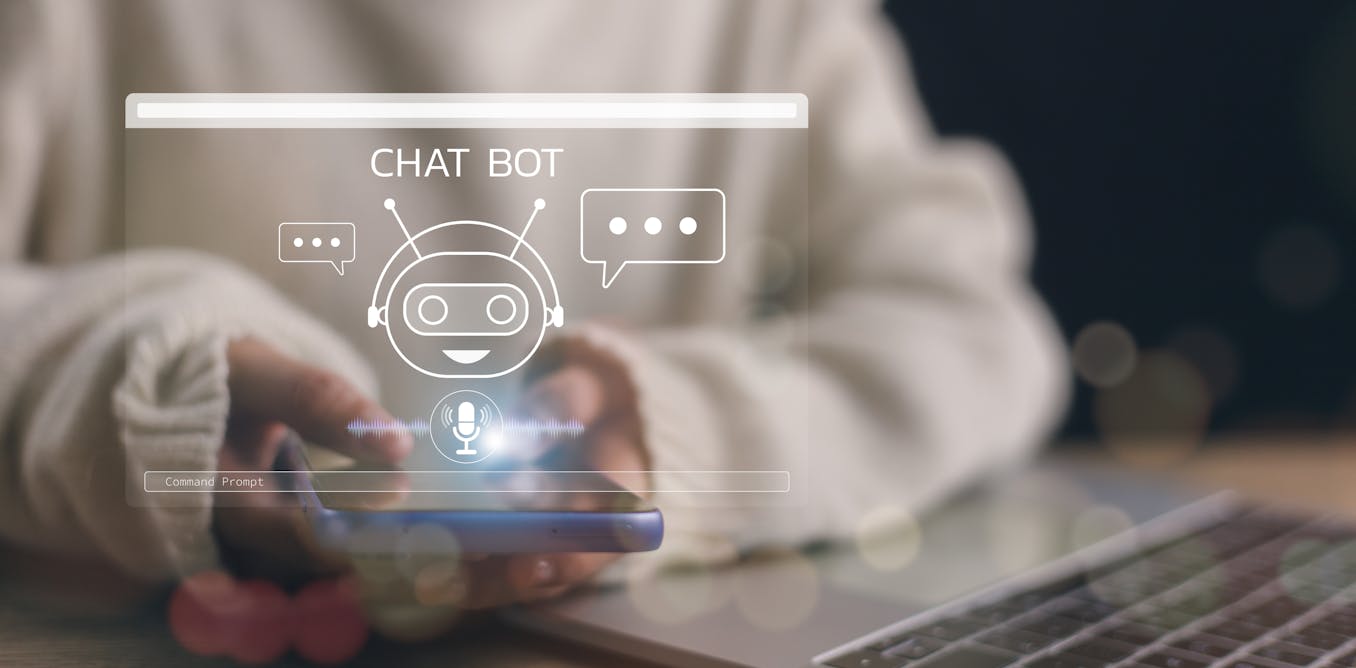

Artificial intelligence (AI) is rapidly transforming education, with schools and universities increasingly experimenting with AI chatbots to assist students in self-directed learning.

These digital assistants offer immediate feedback, answer questions and guide students through complex material. For teachers, chatbots can reduce workload by helping them provide scalable, personalised feedback to students.

But what makes an effective AI teaching assistant? Should it be warm and friendly or professional and competent? What are the potential pitfalls of integrating such technology into the classroom?

Our ongoing research explores student preferences, highlighting the benefits and challenges of using AI chatbots in education.

Warm or competent?

We developed two AI chatbots – John and Jack. Both chatbots were designed to assist university students with self-directed learning tasks but differed in their personas and interaction styles.

John, the “warm” chatbot, featured a friendly face and casual attire. His communication style was encouraging and empathetic, using phrases like “spot on!” and “great progress! Keep it up!”.

When students faced difficulties, John responded with support: “It looks like this part might be tricky. I’m here to help!” His demeanour aimed to create a comfortable and approachable learning environment.

Jack, the “competent” chatbot, had an authoritative appearance with formal business attire. His responses were clear and direct, such as “correct” or “good! This is exactly what I was looking for.”

When identifying problems, he was straightforward: “I see some issues here. Let’s identify where it can be improved.” Jack’s persona was intended to convey professionalism and efficiency.

We introduced the chatbots to university students during their self-directed learning activities. We then collected data through surveys and interviews about their experiences.

Distinct preferences

Students showed distinct preferences. Those from engineering backgrounds tended to favour Jack’s straightforward and concise approach. One engineering student commented:

“Jack felt like someone I could take more seriously. He also pointed out a few additional things that John hadn’t when asked the same question.”

This suggests a professional and efficient interaction style resonated with students who value precision and directness in their studies.

Other students appreciated John’s friendly demeanour and thorough explanations. They found his approachable style helpful, especially when grappling with complex concepts. One student noted:

“John’s encouraging feedback made me feel more comfortable exploring difficult topics.”

Interestingly, some students desired a balance between the two styles. They valued John’s empathy but also appreciated Jack’s efficiency.

The weaknesses of Jack and John

While many students found the AI chatbots helpful, several concerns and potential weaknesses were highlighted. Some felt the chatbots occasionally provided superficial responses that lacked depth. As one student remarked:

“Sometimes, the answers felt generic and didn’t fully address my question.”

There is also a risk of students becoming too dependent on AI assistance, potentially hindering the development of critical thinking and problem-solving skills. One student admitted:

“I worry that always having instant answers could make me less inclined to figure things out on my own.”

The chatbots also sometimes struggled with understanding the context or nuances of complex questions. A student noted:

“When I asked about a specific case study, the chatbot couldn’t grasp the intricacies and gave a broad answer.”

This underscores the difficulty AI has in interpreting complex human language and specialised content.

Privacy and data security concerns were also raised. Some students were uneasy about the data collected during interactions.

Additionally, potential biases in AI responses were a significant concern. Since AI systems learn from existing data, they can inadvertently perpetuate biases present in their training material.

Future-proofing classrooms

The findings highlight the need for a balanced approach in incorporating AI into education. Offering students options to customise their AI assistant’s persona could cater to diverse preferences and learning styles. Enhancing the AI’s ability to understand context and provide deeper, more nuanced responses is also essential.

Human oversight remains crucial. Teachers should continue to play a central role, guiding students and addressing areas where AI falls short. AI should be seen as a tool to augment, not replace, human educators. By collaborating with AI, educators can focus on fostering critical thinking and creativity, skills AI cannot replicate.

Another critical aspect is addressing privacy and bias. Institutions must implement robust data privacy policies and regularly audit AI systems to minimise biases and ensure ethical use.

Transparent communication about how data is used and protected can alleviate student concerns.

The nuances of AI in classrooms

Our study is ongoing, and we plan to expand it to include more students across different courses and educational levels. This broader scope will help us better understand the nuances of student interactions with AI teaching assistants.

By acknowledging both the strengths and weaknesses of AI chatbots, we aim to inform the development of tools that enhance learning outcomes while addressing potential challenges.

The insights from this research could significantly impact how universities design and implement AI teaching assistants in the future.

By tailoring AI tools to meet diverse student needs and addressing the identified issues, educational institutions can leverage AI to create more personalised and effective learning experiences.

This research was completed with Guy Bate and Shohil Kishore. The authors would also like to acknowledge the support of Soul Machines in providing the AI technology used in this research.

The post “Warm and friendly or competent and straightforward? What students want from AI chatbots in the classroom” by Shahper Richter, Senior Lecturer in Digital Marketing, University of Auckland, Waipapa Taumata Rau, was published on 25 November 2024 by theconversation.com.