Anthropomorphism occurs when we attribute human characteristics to non-human entities like animals or machines. Chatbots such as ChatGPT, Gemini and Copilot encourage this by imitating human language: they go beyond familiar words and phrases to adopt human patterns of communication. This lets them hold contextualised, coherent conversations, and even appear to express humour and empathy.

These systems use language that aims to make interaction with them feel natural, fluent and accessible. This facilitates their use in different situations, from customer service to education and entertainment.

Until now, language was considered to be a uniquely human ability. However, advances in generative artificial intelligence (AI), which powers these chatbots, are forcing us to reconsider this idea.

One of the most distinctive traits of human language is subjectivity. It manifests in words or expressions that convey emotional nuance, in the expression of personal opinions and judgements about events, and in the use of contextual or cultural elements.

The first person: “I” and “We”

One typical feature of human subjectivity in language is the use of personal pronouns. The first person – “I” or “we” – allows us to express personal thoughts and experiences. The second person – “you” – engages with the other person, building a relationship between the two participants in a conversation. This is known as intersubjectivity.

Let’s look at a relatively straightforward example:

- User: “I’m organising my home. How can I decide which items to keep, donate or throw away?”

- Chatbot: “Great question! Organising your belongings can be overwhelming, but with a clear strategy you can make these decisions easier. Here are some ideas for deciding what to keep, donate or throw away.”

The chatbot uses the first person implicitly. While it doesn’t say “I” or “me”, it takes on the role of an advisor or guide, and phrases like “here are some ideas” present the ideas as the chatbot’s own.

Because the chatbot assumes the role of helper, the user feels personally addressed, even though the first person is never used explicitly. The use of “here are” also reinforces the image of the chatbot as someone offering something of value.

The second person: the power of “you”

“You” (and its other forms, like “your”) directly addresses the user. We can see this in several parts of the previous example, such as the phrases “organising your belongings” and “you can make these decisions easier”.

By addressing the user in a personal way, the chatbot aims to make them feel like they are playing an active part in the conversation. This kind of language is common in texts that seek to make another person feel actively involved.

Other phrases, such as “Great question!”, not only give a positive impression of the user’s request but also encourage the user to engage. Phrases like “organising your belongings can be overwhelming” suggest a shared experience, creating an illusion of empathy by acknowledging the user’s emotions.

Artificial empathy

The chatbot’s use of the first person simulates awareness and seeks to create an illusion of empathy. By adopting a helper position and using the second person, it engages the user and reinforces the perception of closeness. This combination generates a conversation that feels human, practical, and appropriate for giving advice, even though its empathy comes from an algorithm, not from real understanding.

Getting used to interacting with non-conscious entities that simulate identity and personality may have long-term repercussions, as these interactions can influence our personal, social and cultural lives. As these technologies improve, it will get harder and harder to distinguish a conversation with a real person from one with an AI system.

This increasingly blurred boundary between the human and the artificial affects how we understand authenticity, empathy and conscious presence in communication. We may even come to address AI chatbots as if they were conscious beings, generating confusion about their real capabilities.

Struggling to talk to other humans

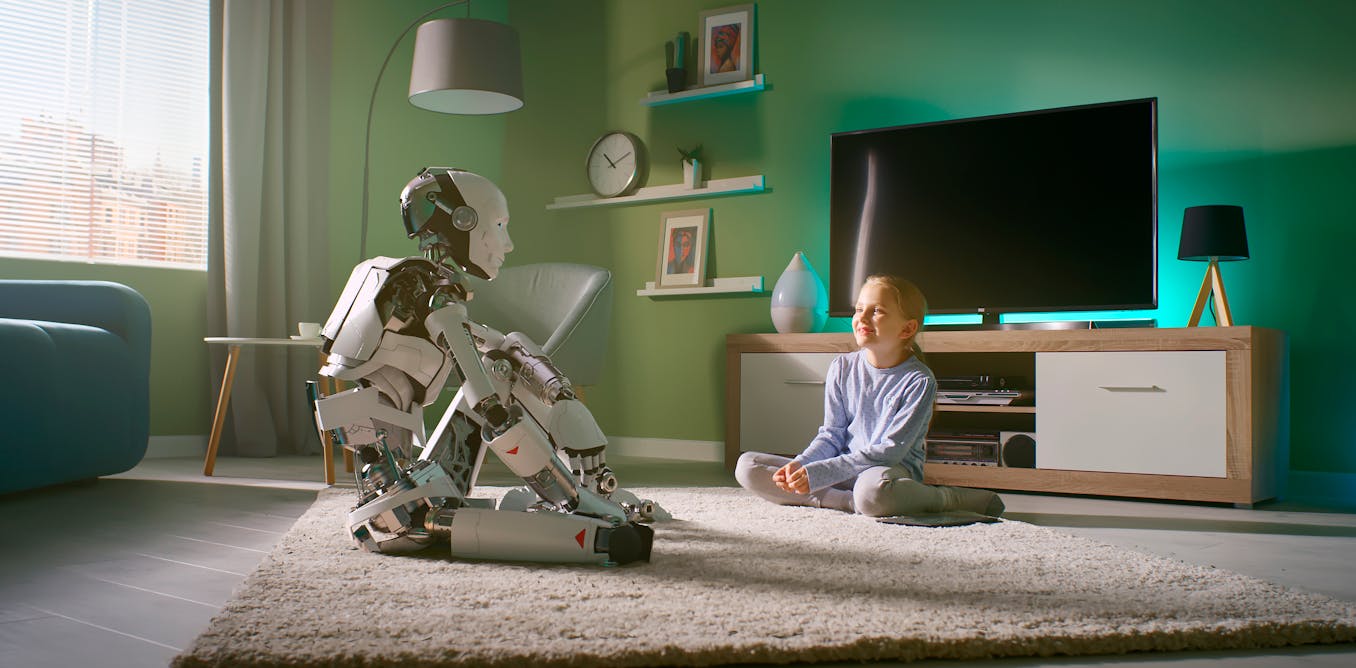

Interactions with machines can also change our expectations of human relationships. As we become accustomed to quick, seamless, conflict-free interactions, we may become more frustrated in our relationships with real people.

Human interactions are coloured by emotions, misunderstandings and complexity. In the long run, repeated interactions with chatbots may diminish our patience and ability to handle conflict and accept the natural imperfections in interpersonal interactions.

Furthermore, prolonged exposure to simulated human interaction raises ethical and philosophical dilemmas. By attributing human qualities to these entities – such as the ability to feel or have intentions – we might begin to question the value of conscious life versus perfect simulation. This could open up debates about robot rights and the value of human consciousness.

Interacting with non-sentient entities that mimic human identity can alter our perception of communication, relationships and identity. While these technologies can offer greater efficiency, it is essential to be aware of their limits and the potential impacts on how we interact, both with machines and with each other.

The post “ChatGPT’s artificial empathy is a language trick. Here’s how it works” by Cristian Augusto Gonzalez Arias, Researcher, Universidade de Santiago de Compostela, was published on 11/27/2024 by theconversation.com