While AI technology is new, information warfare is as old as conflict itself. For millennia, humans have used propaganda, deception and psychological operations to influence adversaries’ decision-making and morale. In the 13th century, for instance, the Mongols destroyed entire cities so that word would spread to the next one, breaking its morale and forcing it to capitulate before troops even arrived.

As technology has progressed, it has opened new frontiers in information warfare. From the Second World War to the 1991 Gulf War, planes dropped leaflets to spread rumours and propaganda. During the Vietnam War, English-language radio shows presented by Hanoi Hannah (real name Trịnh Thị Ngọ) taunted US troops with lists of their locations and casualties to lower morale. Radio propaganda also demonstrated its devastating effect when it was used to guide the Rwandan Genocide in 1994.

Cable TV came next. The 1991 Gulf War was the first major conflict broadcast on a 24-hour news cycle rather than on the evening news. Instead of daily updates in bulletins or newspapers, people at home began receiving a continuous stream of information and images that was invariably biased towards national interests. This technological shift defined public perceptions of the war, and led historians to dub it the “CNN War”.

What we are witnessing today is the next step in this evolution – from print, radio and TV to social media. If the First Gulf War was the CNN War, the 2025 and 2026 conflict between the US, Israel and Iran can be thought of as the first TikTok War, and the first major AI War.

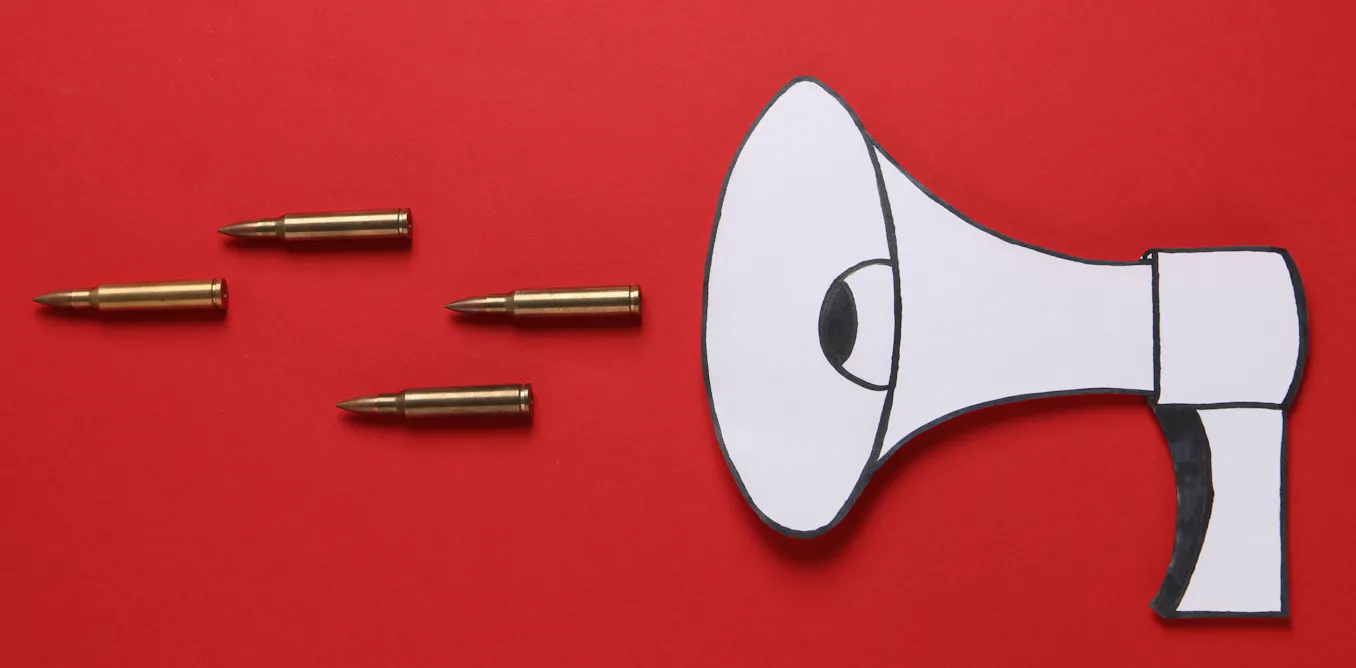

AI has ushered in new forms of information warfare that target perceptions, information environments, and trust itself. AI-generated videos in particular have fundamentally altered how states and non-state actors wage information warfare, manipulate populations, and compete not only in the Gulf, but in a global arena.

This “synthetic media” is frequently deployed and spread to falsify footage of real-world events – from devastating military attacks that never really happened to fake videos of officials pleading for a ceasefire.

But this technology also convincingly and easily creates propaganda material that is obviously fiction. The most notable example is Iran’s viral Lego videos that have repeatedly – and very successfully – mocked Israel and the US throughout the war.

Digital weapons

To fully understand the disruptive potential of AI videos, we can look back at the futurist speculation of dystopian science fiction. Science fiction author William Gibson popularised the term “cyberspace” in his 1984 novel Neuromancer, describing it as a “consensual hallucination” – not reality, but rather a “graphic representation of data abstracted from banks of every computer in the human system”.

But when digital tools like AI videos and social media are used as weapons, the barrier between cyberspace and physical reality becomes permeable. They no longer create virtual reality, but what French media theorist Jean Baudrillard called “hyperreality”. This term describes a state in which the distinction between reality and a simulation of reality collapses, where the simulation feels “more real than real”.

Baudrillard’s work is underpinned by the concept of “simulacra”: copies or representations of something that really exists. He classified simulacra in three orders. The first order is the pre-industrial counterfeit – a faithful copy or replica of a real object – while the second is the mechanically mass-produced object.

Third-order simulacra are simulations, or signs with absolutely no physical form. Take Iran’s Lego videos, which depict scenes such as Trump and Netanyahu using the Iran War as a pretext to distract from the Epstein files while worshipping the pagan Canaanite deity Baal. They have nothing to do with the intentions of the Danish company that makes the ubiquitous plastic brick toys, and yet they have gained enormous traction as viral meme propaganda – both in the West and around the world.

AI is the message

Media theorist Marshall McLuhan’s oft-quoted phrase “the medium is the message” argues that, irrespective of the messages transmitted by media – be it newspaper, radio or TV – the medium in and of itself also tells us something.

The content of Iranian, US and Israeli AI videos is, naturally, entirely different, as each side seeks to undermine its opponents’ narratives. But the medium of AI videos shared on social media also sends a message: these videos transcend an adversary’s borders in ways that previous media could not.

Unlike the pamphlets, radio broadcasts and TV networks of before, the production and consumption of AI videos are geographically unbound. Anyone can make and view them anywhere – whether in Tehran, Tel Aviv, Washington or elsewhere in the world. What this has created is a new era of borderless, decentralised, viral, digital public diplomacy.

Deepfakes, propaganda and ‘truth decay’

Unlike Iran’s Lego videos, AI deepfakes are realistic but entirely fabricated content, making it difficult for viewers to discern truth from falsehood. Early iterations were crude and easily identifiable, but modern deepfakes have reached a level of photorealism and vocal authenticity that can deceive even experienced observers and automated detection systems.

During the so-called “12-Day War” between Israel and Iran in 2025, AI deepfakes and video game footage sought to replicate real combat. Fabricated visuals included scenes of destroyed Israeli aircraft and of collapsing buildings in Tel Aviv and at its airport, while others showed Israeli strikes on Tehran that left a crater in an intersection and sent cars flying.

But believability isn’t always paramount. One widely shared image of a downed Israeli F-35 fighter was taken from a flight simulator game. The plane was obviously too large compared to the bystanders on the ground, but this didn’t stop the image from going viral (it got 23 million views on TikTok) or from being spread by networks sympathetic to Russia seeking to demonstrate the vulnerability of American-made aircraft.

In total, the three most viewed deepfake videos during the 2025 war received 100 million views across social media. One deepfake video that circulated on Facebook even depicted Israeli officials pleading for the US to enforce a ceasefire, claiming “we cannot fight Iran any longer”.

This content was disseminated on TikTok, Telegram and X, where the AI chatbot Grok failed to identify fabricated videos that used footage from other conflicts.

Legal scholars have coined the phrases “liar’s dividend” and “truth decay” to characterise this ongoing trend towards fabricating reality. These terms refer to a media landscape where AI-driven fakes cast even legitimate evidence into doubt, eroding trust to the point where any image or medium can now be dismissed as a deepfake.

The wars of 2025 and 2026 demonstrate that, as states race to develop drones, missiles and defence systems, a parallel arms race is unfolding online. The digital revolution, coupled with advances in AI, has exponentially increased the speed, scale and sophistication of information manipulation. These conflicts herald a new era of information warfare, one where AI technologies are weaponised to influence, disrupt and destabilise adversaries.

The post “the new frontier of information warfare” by Ibrahim Al-Marashi, Adjunct Professor, IE School of Humanities, IE University; California State University San Marcos was published on 04/22/2026 by theconversation.com