Artificial intelligence (AI) is a powerful tool.

In the hands of public police and other criminal justice agencies, AI can lead to injustice. For example, Detroit resident Robert Williams was arrested in front of his children and held in detention for a night after a false positive in an AI facial recognition system. He eventually found out that faulty AI had identified him as the suspect. Williams had his name in the system for years and had to sue the police and local government for wrongful arrest to get it removed.

Around the corner, the same thing happened to another Detroit resident, Michael Oliver, and in New Jersey, to Nijeer Parks. These three men have two things in common. One, they are all victims of false positives in AI facial recognition systems. And two, they are all Black men.

It turns out that AI facial recognition systems cannot tell most people of colour apart. According to one study, the error rate is highest for Black women, at 35 per cent.

These examples reveal the critical issues at stake with using AI in policing and law, especially in this moment, when AI is being used in the criminal justice system and in public and private sectors more than ever before.

In Canada: New laws, old problems

Canada is currently considering two new laws that would have major implications for the use of AI for years to come. Both lack protections for the public when it comes to police use of AI. As scholars who study computer science, policing and law, we are troubled by these gaps.

In Ontario, Bill 194, or the Strengthening Cyber Security and Building Trust in the Public Sector Act, is focused on AI use in the public sector.

The federal Bill C-27 would enact the Artificial Intelligence and Data Act (AIDA). Although the focus of AIDA is the private sector, it has implications for the public sector because of the high number of public-private partnerships in government.

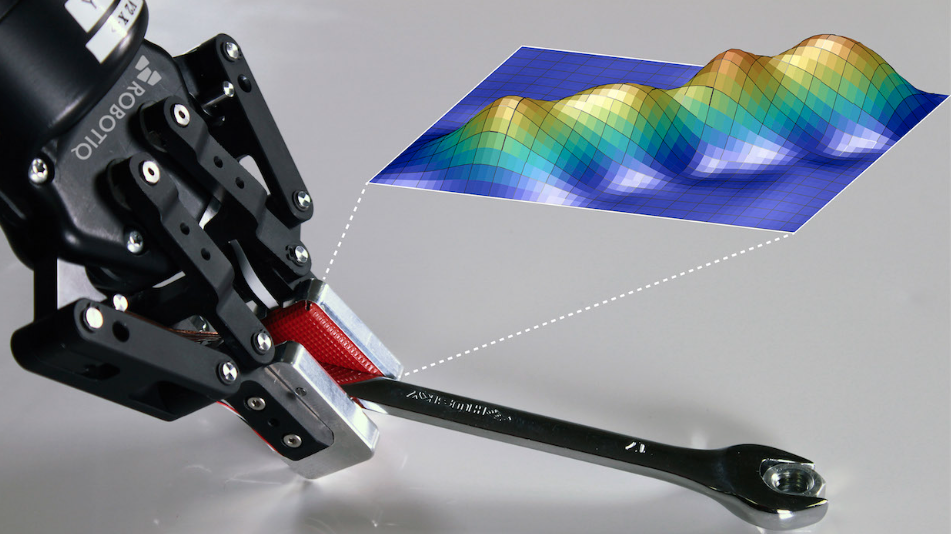

Public police use AI as owners and operators of the technology. They can also contract a private sector agency as a proxy to conduct AI-driven analyses.

Because of this public use of private-sector AI, even laws intended to regulate private sector use of AI should offer rules of engagement for criminal justice agencies using the technology.

Racial profiling and AI

AI has powerful predictive capabilities. Using machine learning, AI can be fed a database of profiles to “determine” the probability of who might do what, or to match faces to profiles. AI might also determine where police patrols are directed, based on past crime data.

These techniques sound as if they might boost efficiency or reduce bias. However, police use of AI can increase both racial profiling and unnecessary police deployments.

Civil liberties and privacy groups have written reports on AI and surveillance practices. They provide examples of racial bias from places where police use AI technology. And they point to the many false arrests.

In Canada, the Royal Canadian Mounted Police (RCMP) and other policing agencies, including the Toronto Police Service and the Ontario Provincial Police, have already been called out by the Office of the Privacy Commissioner of Canada for using the Clearview AI technology to conduct mass surveillance.

Clearview AI has a database of over three billion images that were collected without consent by scraping the internet. Clearview AI matches faces from the database against other footage. This violates Canadian privacy laws. The Office of the Privacy Commissioner of Canada has critiqued RCMP use of this technology, and the Toronto Police Service suspended its use of the product.

Because Bill 194 and Bill C-27 leave out the regulation of law enforcement, AI companies in Canada could enable similar mass surveillance.

The EU leads the way

Internationally, there have been gains in getting AI use regulated in the public sector.

So far, the European Union’s AI Act is the best piece of law in the world when it comes to protecting the privacy and civil liberties of its citizens.

The EU’s AI Act takes a risk-and-harms-based approach to the regulation of AI, expecting users of AI to take concrete steps to protect personal information and prevent mass surveillance.

In contrast, both Canadian and U.S. laws pit the rights of citizens to be free from mass surveillance against the desire of corporations to be efficient and competitive.

Still time to make changes

There is still time to make changes. Bill 194 is being debated by the Ontario Legislative Assembly. And Bill C-27 is being debated in the Canadian Parliament.

Leaving police and criminal justice agencies out of Bill 194 and Bill C-27 is a glaring oversight. It potentially brings justice into disrepute in Canada.

The Law Commission of Ontario has critiqued Bill 194. It says the proposed law does not promote human rights or privacy, and would allow the unhindered use of AI in ways that could upset the privacy of Canadians. It also says Bill 194 would allow public bodies to use AI in secret, and that the bill ignores AI use by police, jails, courts and other criminal justice agencies.

Regarding Bill C-27, the Canadian Civil Liberties Association (CCLA) has issued a cautionary note and has petitioned for the bill to be withdrawn. They say the regulatory measures in Bill C-27 are geared toward enhancing private sector productivity and data mining rather than protecting the privacy and civil liberties of Canadian citizens.

Given that police and national security agencies often work with private providers in surveillance and security intelligence activities, regulations are needed to cover such partnerships. But police and national security agencies are not mentioned in Bill C-27.

The CCLA recommends Bill C-27 be harmonized with the European Union’s AI Act to include guard rails preventing mass surveillance and protecting against abuses of the power of AI.

These will be Canada’s first AI laws. We are years behind where regulation needs to be to prevent abuses of AI use in the public and private sectors.

During this time of growth in the use of AI by criminal justice agencies, changes must be made to Bill 194 and Bill C-27 now to protect Canadian citizens.

The post “AI used by police cannot tell Black people apart and other reasons Canada’s AI laws need urgent attention” by Kevin Walby, Associate Professor of Criminal Justice, University of Winnipeg was published on 08/25/2024 by theconversation.com